AI's next feat will be its descent from the cloud

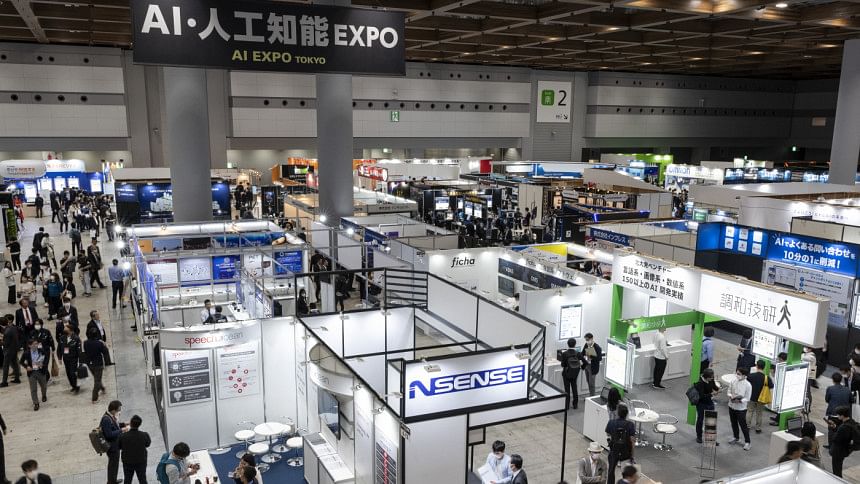

It's been two years since ChatGPT made its public debut, kicking off a rush to invest in generative artificial intelligence. The frenzy has lifted valuations for startups like OpenAI, inventor of the chatbot, as well as technology titans whose cloud computing platforms train and host the models that enable these services. The current boom is already showing signs of strain. AI's next phase of growth may be in the palm of your hand.

So-called generative AI, where a model creates new content based on the data it's trained on, today largely exists in the cloud. OpenAI, for example, uses Microsoft's Azure platform to train and run its large language models (LLMs). Anyone with an internet connection can make a query on ChatGPT using Azure's data centres around the world. But as models get larger and more complex, so does the infrastructure to train them and handle queries from users.

The result is a scramble to build bigger and more powerful data centres. OpenAI and Microsoft, for example, are in talks for a data centre project set to launch in 2028 that's projected to cost a whopping $100 billion, according to The Information.

All in all, Google owner Alphabet, Microsoft and Meta Platforms, which owns Instagram and Facebook, are forecast to spend a combined $160 billion in capital expenditures next year, per LSEG data, three-quarters more than in 2022. Most of that will go toward purchasing Nvidia's coveted $25,000 graphics processing units (GPUs) and other related infrastructure to train models. The $3 trillion company's CEO Jensen Huang predicts investment in data centres will double to $2 trillion over the next four or five years.

These sums raise awkward questions about how sustainable this level of spending is, and whether chatbots and other applications can bring in enough revenue to generate a positive return on such staggering investments. Companies are also grappling with the challenge of finding land to house new data centres and securing sufficient electricity supplies to power and cool the chips. Big Tech's dominance of LLMs and cloud computing is also attracting regulatory scrutiny. Last year, Microsoft, Amazon and Google accounted for 58 percent of global AI server procurement, Morgan Stanley analysts reckon.

These factors explain the latest tech buzzword: "edge AI". This phrase refers to algorithms and models that run on smartphones or personal computers at the edge of a network rather than in a centralised server farm. This approach has several advantages over cloud-based AI. Users would get responses on their devices in real time, without the need for a high-speed internet connection. Their personal data would also stay on the device, rather than being transmitted to a server owned by a third party. And given the ubiquity of handsets and PCs, adoption could be rapid. Analysts at UBS reckon nearly 50 percent of smartphones, roughly 583 million units, will have generative AI capabilities by 2027, up from just 4 percent in 2023.

The biggest hurdle is technological: today's devices do not have the computing power, energy and memory bandwidth to run a large model such as OpenAI's GPT-4, which contains an estimated 1.8 trillion parameters. Even Meta's relatively small Llama models, with 7 billion parameters, would require an additional 14 gigabytes of temporary storage to work on a phone. Apple's latest iPhone 16 comes with only 8GB of such random access memory (RAM).
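The 14-gigabyte figure is consistent with a common rule of thumb: the memory needed to hold a model is roughly its parameter count multiplied by the bytes used to store each parameter. The sketch below is a back-of-envelope illustration, not a measurement. It assumes 2 bytes per parameter (16-bit weights), which the article does not state, and it ignores the extra memory a model needs while running. The function name and the 4-bit case are hypothetical additions for illustration.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Approximate memory needed to hold model weights, in decimal gigabytes.

    Assumption: 2 bytes per parameter (16-bit weights) unless stated otherwise.
    Working memory used during inference is not counted.
    """
    return num_params * bytes_per_param / 1e9


IPHONE_16_RAM_GB = 8  # RAM figure quoted in the article

# 7 billion parameters at 16 bits each.
small_model_gb = weight_memory_gb(7e9)

# GPT-4's estimated 1.8 trillion parameters at 16 bits each.
large_model_gb = weight_memory_gb(1.8e12)

# Hypothetical: the same 7-billion-parameter model compressed to 4 bits
# (0.5 bytes) per parameter. This is an illustrative assumption only.
small_model_4bit_gb = weight_memory_gb(7e9, bytes_per_param=0.5)

for label, size_gb in [
    ("7B parameters, 16-bit", small_model_gb),
    ("1.8T parameters, 16-bit", large_model_gb),
    ("7B parameters, 4-bit (hypothetical)", small_model_4bit_gb),
]:
    verdict = "fits" if size_gb <= IPHONE_16_RAM_GB else "does not fit"
    print(f"{label}: {size_gb:,.1f} GB, {verdict} in {IPHONE_16_RAM_GB} GB of RAM")
```

Under these assumptions the 7-billion-parameter model needs about 14 GB, well above the phone's 8 GB, while the 1.8-trillion-parameter estimate works out to thousands of gigabytes. Storing each parameter in fewer bits shrinks the footprint proportionally, usually at some cost in accuracy.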

Even so, there are reasons to be optimistic. Companies and developers are increasingly turning to smaller models which are customised for specific tasks. They require less data and effort to train (Google's self-described "lightweight" Gemma architecture contains as few as 2 billion parameters) and are typically open-source and free to use. And because of their highly specialised nature, smaller models often outperform their larger and more generalised counterparts, with fewer errors.

Besides, most contemporary day-to-day use cases for AI, such as photo-editing tools and personal assistants, probably won't require large models. Some smartphones already boast live translation and real-time transcription functions. And it makes sense for cloud providers to shift basic AI functions to the edge, freeing up powerful data centres for more complex tasks.

At the same time, makers of semiconductors and other components are cramming more processing power and memory into a phone or PC. Research firm Yole Group forecasts that the proportion of smartphones that can support an LLM with 7 billion parameters will grow to 11 percent this year, up from 8 percent last year. Leading chipmakers such as Taiwan's TSMC and South Korea's Samsung Electronics and SK Hynix are pioneering new methods such as advanced packaging in semiconductors, whereby they stack multiple chips into one "chiplet". That allows them to build even more powerful processors without having to shrink chip circuitry in order to squeeze in more transistors. One former TSMC executive predicted that within a decade, this technology could lead to a "multichiplet" containing more than 1 trillion transistors.

For investors, edge AI has the potential to mint more winners. So far, shareholders have assumed that most of the gains from AI will accrue to the biggest tech firms with the deepest pockets, as well as Nvidia and a handful of startups. Yet AI tools could prompt consumers to upgrade to newer and more sophisticated smartphones and personal computers. UBS analysts forecast combined sales in the two markets will surpass $700 billion by 2027, up 14 percent from this year. Brands from Apple to Lenovo, as well as their suppliers, all stand to benefit.

In semiconductors, Nvidia's advanced GPUs will still dominate. But other chip firms like Qualcomm and MediaTek should also gain. MediaTek, the Taiwanese group, is set to unveil its latest chipset that can support large models next month; executives expect revenue from its flagship mobile products to grow 50 percent this year.

As with the cloud-based variety, the success of edge AI will depend on coming up with compelling applications which users think are worth paying for. If that happens, the next big thing in AI will be found in smaller models and smaller devices.

For all latest news, follow The Daily Star's Google News channel.
